Deployment pipeline: let’s do it in the cloud!

How can automation, the public cloud, and AWS and Azure tools help us build a deployment pipeline?

Kamil Kovář

I could start with the old refrain about how constant change pushes businesses to develop ever faster, which forces us to release software constantly. I’m not denying that. But we want to automate the entire process, from saving the code to releasing it to production, for two other reasons that are closer to home. First, deploying repeatedly by hand is tedious work full of human error. Second, the amount of software is growing exponentially as digitization advances and applications are split into microservices. We therefore have no choice but to automate all non-creative development activities. How can the public cloud help us build a deployment pipeline?

In software development, a deployment pipeline is a system that automatically takes new versioned code from the repository to non-production and production environments.

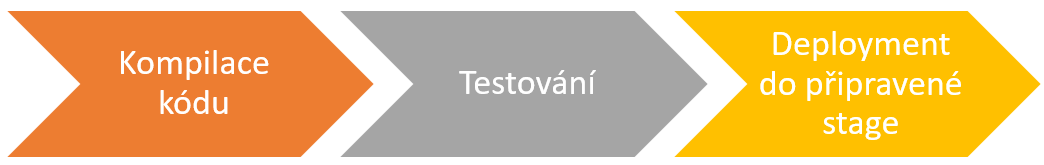

We usually refer to the basic steps from writing new code to running it by the broad term build (although in some areas the meaning is narrowed significantly to just building an image). There are basically three steps:

- compilation

- testing

- deployment

Just to be sure, in case software development is not your daily bread, let’s go over what each step involves.
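To make the three steps concrete before going through them one by one, here is a minimal Python sketch of a pipeline that runs its stages in order and stops at the first failure. The stage names and the `run_pipeline` function are hypothetical; each stage is just a function returning `True` or `False`, standing in for a real compiler, test runner or deployment script.

```python
from typing import Callable, List, Tuple

# Hypothetical interface: a stage is a name plus a function that returns True on success.
Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> List[str]:
    """Run stages in order and stop at the first failure (fail fast)."""
    log: List[str] = []
    for name, action in stages:
        ok = action()
        log.append(f"{name}: {'ok' if ok else 'FAILED'}")
        if not ok:
            break  # later stages never run on a broken build
    return log


if __name__ == "__main__":
    stages = [
        ("compile", lambda: True),
        ("test", lambda: False),   # simulate a failing test suite
        ("deploy", lambda: True),  # never reached because "test" failed
    ]
    for line in run_pipeline(stages):
        print(line)
```

Failing fast is the design choice that matters here: a build that did not pass its tests should never reach an environment.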

Compilation

The developer writes the program code in a development tool (such as Eclipse or Visual Studio), which helps them build the code logic quickly and with few errors. The code is committed to a repository (e.g. GitHub, Bitbucket), where it is versioned, merged with the work of other developers, placed in the appropriate development branch, linked to the requested change, etc.

The code describes what the software is supposed to do in a language of your choice, but it has to be translated into instructions that the processor can execute. Therefore, the code needs to be compiled. This has been an automated process for decades: a component of the development tool called a compiler translates the code and creates a package of executables, libraries, data structures, etc. The package is stored in a repository as another artifact.
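As a small illustration of compiling and packaging, the sketch below uses Python’s standard `py_compile` and `zipfile` modules: it byte-compiles every `.py` file in a source directory and zips the results into a single artifact. Python stands in for a compiled language here, and `build_package`, the directory layout and the archive name are assumptions for illustration, not a recommended packaging format.

```python
import py_compile
import tempfile
import zipfile
from pathlib import Path
from typing import List


def build_package(src_dir: Path, out_zip: Path) -> List[str]:
    """Byte-compile every .py file in src_dir and zip the results into one artifact.

    Raises py_compile.PyCompileError on a syntax error, i.e. the build fails.
    """
    with tempfile.TemporaryDirectory() as build_dir:
        for src in sorted(src_dir.glob("*.py")):
            target = Path(build_dir) / (src.stem + ".pyc")
            py_compile.compile(str(src), cfile=str(target), doraise=True)
        # Package the compiled files; the archive is the artifact we would store.
        with zipfile.ZipFile(out_zip, "w") as zf:
            for pyc in sorted(Path(build_dir).iterdir()):
                zf.write(pyc, arcname=pyc.name)
    with zipfile.ZipFile(out_zip) as zf:
        return zf.namelist()


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        src = Path(tmp, "src")
        src.mkdir()
        (src / "app.py").write_text("print('hello')\n")
        print(build_package(src, Path(tmp, "app-1.0.0.zip")))  # ['app.pyc']
```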

This step also includes creating a container image, which bundles the compiled package with the libraries and operating system components for which the image is intended. The image is stored in a registry (Docker Hub, JFrog Artifactory, Amazon ECR, Azure Container Registry, etc.).
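Tags are what tie an image in the registry back to a release. One common convention, assumed here rather than prescribed by any registry, is to combine the release version with a 7-character short commit hash. The hypothetical `image_reference` helper below builds such a reference and checks the tag against Docker’s tag rules (at most 128 characters from `[A-Za-z0-9_.-]`, not starting with `.` or `-`); the registry and repository names in the example are made up.

```python
import re

# Docker tag rule: 1-128 characters from [A-Za-z0-9_.-], not starting with "." or "-".
TAG_PATTERN = re.compile(r"[A-Za-z0-9_][A-Za-z0-9_.-]{0,127}")


def image_reference(registry: str, repository: str, version: str, commit: str) -> str:
    """Build registry/repository:tag where the tag ties the image to a release and a commit."""
    tag = f"{version}-{commit[:7]}"  # 7-character short commit hash
    if not TAG_PATTERN.fullmatch(tag):
        raise ValueError(f"invalid image tag: {tag!r}")
    return f"{registry}/{repository}:{tag}"


if __name__ == "__main__":
    # Made-up registry and repository names, for illustration only.
    print(image_reference("myregistry.azurecr.io", "shop/web", "1.4.2", "3f9c2ab81d0e"))
    # myregistry.azurecr.io/shop/web:1.4.2-3f9c2ab
```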

Testing

A large number of tests can be performed on the software at different stages of development – some automated, some semi-automated and some manual. The more frequently I want to release, the more automated tests someone has to laboriously prepare. Among the well-known tools for automated testing, let’s mention Selenium.

Before compiling, the code can be checked for quality and security with tools such as SonarQube, so-called continuous code inspection. Conversely, after a package or container image is created, a comprehensive vulnerability scan can be performed on it, for example with the Qualys scanner integrated into Azure Security Center, which scans container images stored in Azure Container Registry.
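In a pipeline, such inspections and scans pay off when their findings become a go/no-go decision. The sketch below shows a generic severity gate; the `Finding` record, the severity scale and the default threshold are assumptions for illustration and do not reproduce the output format of SonarQube, Qualys or any other scanner.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Assumed severity scale for this sketch; real scanners define their own.
SEVERITY_RANK = {"LOW": 1, "MEDIUM": 2, "HIGH": 3, "CRITICAL": 4}


@dataclass
class Finding:
    rule: str      # identifier of the violated rule or vulnerability
    severity: str  # one of the SEVERITY_RANK keys; unknown values never block


def gate(findings: List[Finding], fail_at: str = "HIGH") -> Tuple[bool, List[str]]:
    """Pass only if no finding reaches the fail_at severity; also return the blocking rules."""
    threshold = SEVERITY_RANK[fail_at]
    blocking = [f.rule for f in findings if SEVERITY_RANK.get(f.severity, 0) >= threshold]
    return not blocking, blocking


if __name__ == "__main__":
    report = [Finding("sql-injection", "CRITICAL"), Finding("unused-variable", "LOW")]
    print(gate(report))  # (False, ['sql-injection'])
```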

I use unit tests to automatically check individual program modules, integration tests to check that the program is able to transfer data between components, and functional tests to verify that the software works as specified.
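For example, a unit test checks one function in isolation. The sketch uses Python’s built-in `unittest`; `apply_discount` is a hypothetical module function invented for the example.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical module under test: reduce price by percent, rounded to cents."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTest(unittest.TestCase):
    def test_regular_discount(self):
        self.assertEqual(apply_discount(200.0, 15), 170.0)

    def test_rejects_invalid_percent(self):
        with self.assertRaises(ValueError):
            apply_discount(100.0, 120)


if __name__ == "__main__":
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ApplyDiscountTest)
    unittest.TextTestRunner(verbosity=2).run(suite)
```

In a pipeline, a failing test makes the test runner report failure, which stops the build before anything is deployed.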

Next, after deployment to an environment (stage), we talk about end-to-end tests from the perspective of the user and the entire environment, acceptance tests that formally approve the move to production, performance tests that verify the speed of the application in a given environment, and penetration tests that determine whether the application environment is vulnerable to attack.
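A performance test often boils down to measuring latency against a budget. In this minimal sketch, a local function call stands in for a request to the deployed application (an assumption; a real test would call the environment’s endpoint), and the 95th percentile is computed with the standard `statistics.quantiles` (Python 3.8+). The function names and the budget are hypothetical.

```python
import statistics
import time
from typing import Callable


def p95_latency_ms(call: Callable[[], object], runs: int = 50) -> float:
    """Invoke `call` `runs` times (at least 2) and return the 95th-percentile latency in ms."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000)
    # n=20 yields 19 cut points; the last one is the 95th percentile.
    return statistics.quantiles(samples, n=20, method="inclusive")[-1]


def within_budget(call: Callable[[], object], budget_ms: float, runs: int = 50) -> bool:
    """The performance test passes if the p95 latency stays within the budget."""
    return p95_latency_ms(call, runs) <= budget_ms


if __name__ == "__main__":
    # A local computation stands in for a request to the deployed application.
    print(within_budget(lambda: sum(range(10_000)), budget_ms=50.0))
```

The "inclusive" method keeps every cut point within the range of measured samples.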

Deployment

The application environment consists of multiple components, virtual servers, containers, frameworks, databases and network elements.

Deployment means placing the compiled software package in the target location in the file system, configuring the environment as needed and, if necessary, integrating it with surrounding systems. This can be done by hand on individual machines using prepared scripts, or by automation that performs the entire deployment in a controlled manner from a central control point. The best-known tools include Jenkins and TeamCity.
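The placing-and-configuring part of deployment can be sketched as follows. This is one common pattern, assumed here rather than taken from Jenkins or TeamCity: each version gets its own release directory, its configuration is written next to it, and a `current` symlink is switched atomically to the new release, so rolling back is a matter of re-pointing the link. The `deploy` function, the directory layout and the `config.json` name are hypothetical, and the sketch assumes a POSIX file system.

```python
import json
import os
import shutil
import tempfile
from pathlib import Path


def deploy(package: Path, app_root: Path, version: str, config: dict) -> Path:
    """Install `package` as release `version` and switch app_root/current to it (POSIX)."""
    release = app_root / "releases" / version
    release.mkdir(parents=True)  # raises FileExistsError: a release is never overwritten
    shutil.copy2(package, release / package.name)
    (release / "config.json").write_text(json.dumps(config, indent=2))

    # Create the new link under a temporary name, then rename it over "current".
    # The rename is atomic on POSIX, so "current" always points to a complete release.
    tmp_link = app_root / "current.tmp"
    if tmp_link.is_symlink():
        tmp_link.unlink()  # leftover from an interrupted deployment
    os.symlink(Path("releases") / version, tmp_link)  # relative target
    os.replace(tmp_link, app_root / "current")
    return (app_root / "current").resolve()


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        pkg = root / "app-1.0.0.zip"
        pkg.write_bytes(b"compiled package")
        live = deploy(pkg, root, "1.0.0", {"db_host": "db.internal"})
        print(live.name)  # 1.0.0
```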

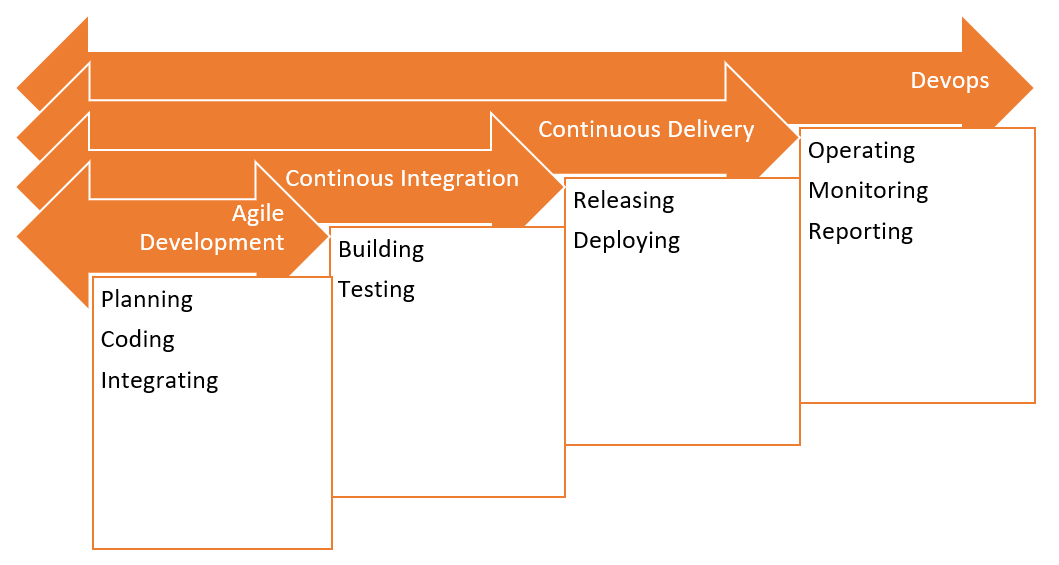

Automatic deployment pipeline

When we talk about an automatic deployment pipeline, we mean performing all three of the above steps (and usually much more) automatically. To do that, we need tools for:

- Source code control

- Compiling code and preparing images

- Testing the release

- Configuring the environment

- Monitoring the entire process

The objectives are:

- continuous integration – the ability to integrate code changes from multiple developers into a common package; not to be confused with integration with other applications, which also has to be maintained continuously;

- continuous delivery – after making changes to the code and after manual approval, I am able to automatically deploy different versions of the code to different environments, including production; deployment is started manually at the appropriate time within the release management processes;

- or continuous deployment – fully automatic processing, from code checks and unit tests through the deployment itself to any additional functional and non-functional tests of the deployed software in a given environment (“one-click”).
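The difference between continuous delivery and continuous deployment comes down to who starts the production step. The hypothetical sketch below models it as a promotion policy over three assumed environments (`dev`, `test`, `prod`): a broken build goes nowhere, continuous delivery stops before production until someone approves it, and continuous deployment goes all the way.

```python
from enum import Enum
from typing import List

ENVIRONMENTS = ["dev", "test", "prod"]  # hypothetical environment names, in promotion order


class Mode(Enum):
    CONTINUOUS_DELIVERY = "delivery"      # production waits for a manual approval
    CONTINUOUS_DEPLOYMENT = "deployment"  # every green build goes all the way


def promote(build_ok: bool, mode: Mode, prod_approved: bool = False) -> List[str]:
    """Return the environments that a build is deployed to under the given mode."""
    if not build_ok:
        return []  # continuous integration caught a broken build; nothing is deployed
    deployed = []
    for env in ENVIRONMENTS:
        if env == "prod" and mode is Mode.CONTINUOUS_DELIVERY and not prod_approved:
            break  # release management starts the production deployment later
        deployed.append(env)
    return deployed


if __name__ == "__main__":
    print(promote(True, Mode.CONTINUOUS_DELIVERY))                      # ['dev', 'test']
    print(promote(True, Mode.CONTINUOUS_DELIVERY, prod_approved=True))  # ['dev', 'test', 'prod']
    print(promote(True, Mode.CONTINUOUS_DEPLOYMENT))                    # ['dev', 'test', 'prod']
```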

Maybe you are already allergic to all these “continuous” terms, and I wouldn’t be surprised. The word agile likewise makes one feel as if someone keeps repeating “…blood, blood, blood…”. Still, it appears only once in this text. Many companies live off trends and hype, inventing and recycling fashionable concepts. But that doesn’t change the fact that with the right tools and processes, teamwork on software is more effective.

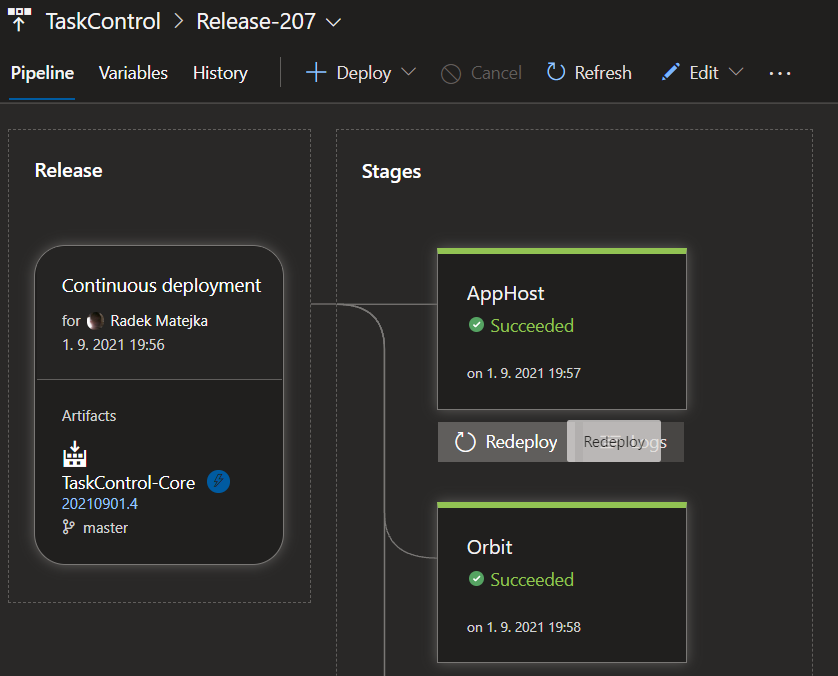

Situation A: We already have a pipeline

In many companies, a pipeline for new projects is already at least partially in place and typically looks like this:

Testing and security are usually the most underestimated or bypassed parts here, often simply because of cost and time constraints in development.

Building even such a simple pipeline requires collaboration between development and operations teams, with security and, of course, the business involved. It requires expertise, experience and often weeks of work. In large environments, an entire team of specialists is dedicated to operating and developing deployment pipelines.

What will it look like in the public cloud?

If the company already has experience with automation, the transition to cloud services (which we help customers with at ORBIT) usually follows one of two paths:

The path of least resistance

If a company is starting to use cloud resources, already has a working pipeline, and sees the cloud as another flexible datacenter from which it draws IaaS, PaaS and container resources, it can simply keep using its existing setup. Common tools such as the aforementioned Jenkins have plug-ins for both Azure and AWS resources, so the public cloud can serve as an additional platform for further stages.

Native tools path

If the public cloud will be the company’s majority or exclusive platform (for example, when most workloads are being migrated from on-premises), it pays to use the cloud providers’ own tools. Microsoft and Amazon take different approaches here.

Microsoft has been building its development and CI/CD tools for a long time, evolving from Team Foundation Server to Azure DevOps, which can be deployed and used both in the cloud and on-premise.

AWS DevOps tools are separate services (unlike the integrated Azure DevOps), so you can use only the ones you need.

Let’s briefly introduce what each provider offers. We won’t compare them, but note that both cloud platforms provide tools that can control the cloud resources of all major public providers and thus allow deployment in a multi-cloud environment. (Read more about DevOps in this article.)

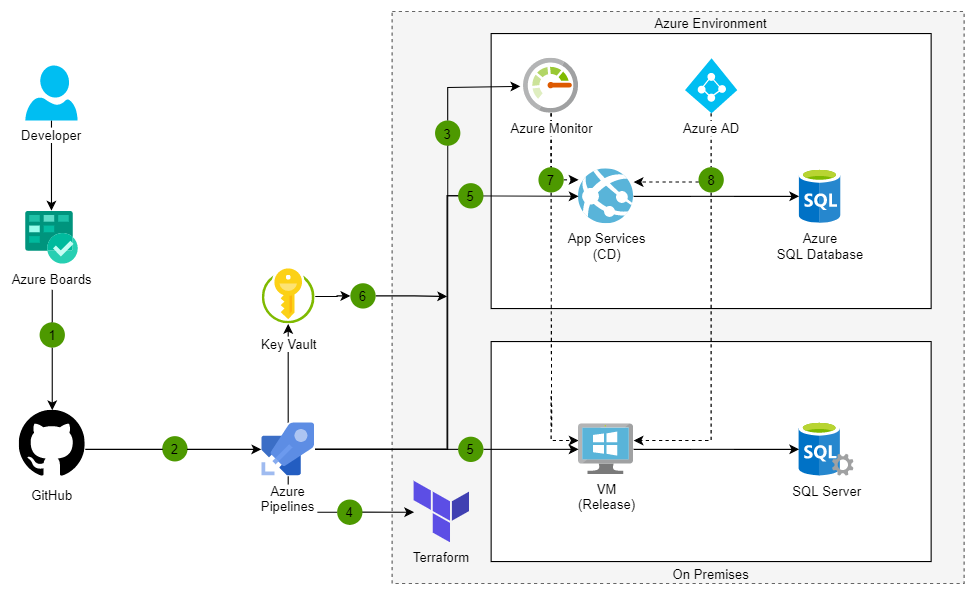

Microsoft Azure DevOps

Azure DevOps exists as Azure DevOps Server (for on-premises installation) and as Azure DevOps Services (a service hosted in Microsoft Azure). It originated from the Team Foundation Server and Visual Studio Team System products tied to MS Visual Studio (the development tool). The current cloud product is designed to work with both MS Visual Studio and Eclipse, uses Git as its main repository, and includes tools for the entire DevOps cycle.

It consists of five modules:

Azure Boards

- for planning development work in Kanban style

Azure Pipelines

- for building, testing and deployment in conjunction with GitHub

- supports Node.js, Python, Java, PHP, Ruby, C/C++ and of course .NET

- works with Docker Hub and Azure Container Registry and can deploy to Kubernetes (K8s) environments

Azure Repos

- hosted Git repository

Azure Test Plans

- support for testing in conjunction with Stories from Azure Boards

Azure Artifacts

- a tool for sharing artifacts and packages in teams

Azure DevOps tools are connected in a single ecosystem, and you can start with any module.

The result can be, for example, this simple architecture for hybrid web application deployment using Terraform:

Within limits on the number of users and parallel pipelines, the tools can be used for free (five users, up to 2 GB in Artifacts). Beyond that, the Basic plan (without Test Plans) costs five dollars per user per month, and the plan that includes Test Plans costs almost ten times as much (but testing is testing).

I recently heard an interesting talk about using Azure DevOps not only for developing software for internal and external customers, but also by IT staff who write scripts and need to maintain them, version them, run them synchronously, etc. In that sense, every IT admin is a developer who can make good use of Azure DevOps. Good idea.

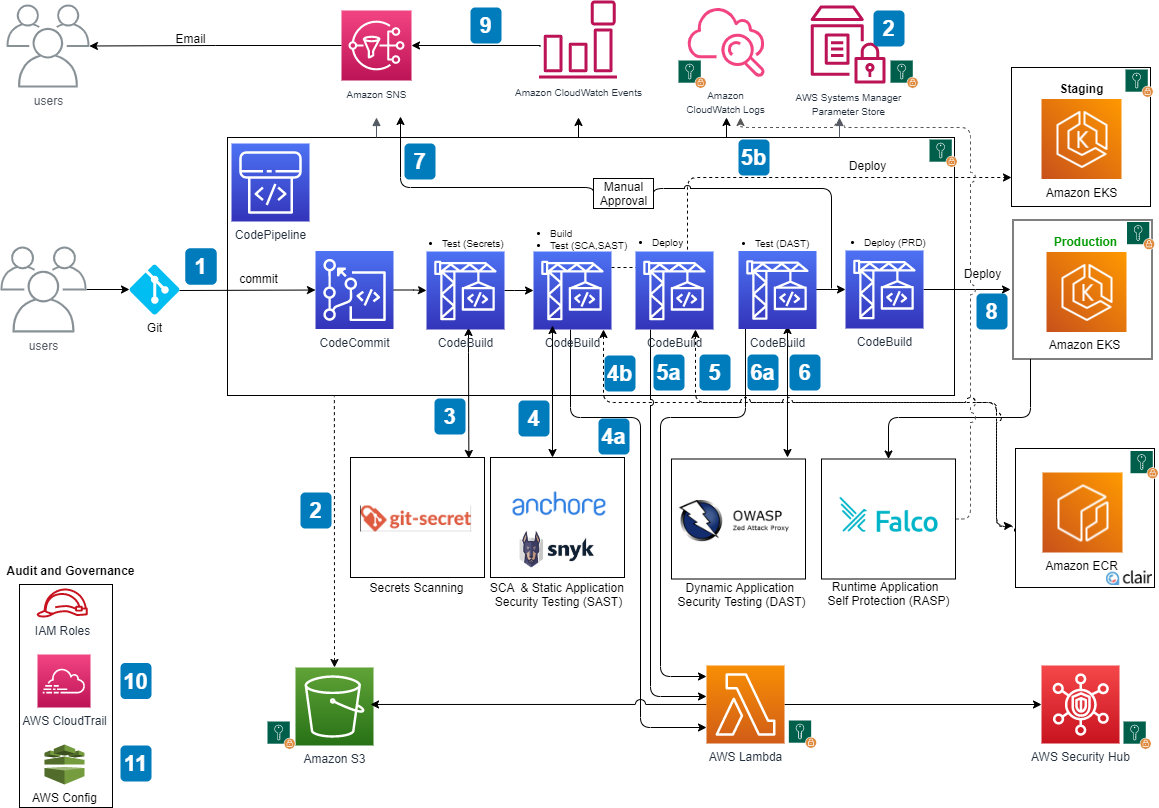

AWS continuous deployment tools

Amazon provides a number of proprietary and integrated third-party tools from which a complete automated deployment pipeline can be easily assembled.

AWS CodeArtifact

- secure storage of artifacts

- you pay for the volume of data stored

AWS CodeBuild

- a service that compiles source code, runs tests and builds software packages

- you pay for build time

Amazon CodeGuru

- a machine learning service that finds bugs and vulnerabilities directly in the code (supports Java and Python)

- priced by the number of lines of code

AWS CodeCommit

- Git-based hosted source code repository

- free for up to five users

AWS CodeDeploy

- automated deployment to EC2, Fargate (serverless containers) and Lambda, as well as to on-premises servers

- no charge except for updates to on-premises servers; you pay for the resources used

AWS CodePipeline

- visualization and automation of the stages of the software release process

- about a dollar per active pipeline per month

AWS CloudFormation can be added to deploy infrastructure as code as part of the pipeline, and individual steps can be triggered using AWS Lambda. The Amazon ECR registry and the Amazon EKS Kubernetes service are integrated into the containerization ecosystem. Another useful feature is Amazon ECR image scanning, which identifies vulnerabilities in container images.
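For illustration, here is a minimal sketch of a Lambda function invoked as a custom step by CodePipeline. It reads the job ID and the action’s `UserParameters` from the `CodePipeline.job` event, runs a check, and reports the result back with the boto3 CodePipeline client’s `put_job_success_result` or `put_job_failure_result`; without that report, the action does not complete until it times out. The check itself (`smoke_check`, the allowed environments and the JSON format of the parameters) is a hypothetical placeholder, and the optional `client` argument exists only so the handler can be tried without AWS.

```python
import json
from typing import Tuple

ALLOWED_ENVIRONMENTS = {"dev", "test", "prod"}  # hypothetical


def smoke_check(params: dict) -> Tuple[bool, str]:
    """Hypothetical placeholder for the real step (HTTP probe, DB migration, ...)."""
    env = params.get("environment")
    if env not in ALLOWED_ENVIRONMENTS:
        return False, f"unknown environment: {env!r}"
    return True, f"checks passed for {env}"


def handler(event, context, client=None):
    """Entry point for a Lambda function invoked by a CodePipeline action."""
    if client is None:
        import boto3  # bundled with the AWS Lambda Python runtime
        client = boto3.client("codepipeline")

    job = event["CodePipeline.job"]
    job_id = job["id"]
    config = job["data"]["actionConfiguration"]["configuration"]
    try:
        # UserParameters is a free-form string; this sketch assumes it holds a JSON object.
        params = json.loads(config.get("UserParameters", "{}"))
        if not isinstance(params, dict):
            raise ValueError("expected a JSON object")
        ok, message = smoke_check(params)
    except ValueError as exc:  # json.JSONDecodeError is a subclass of ValueError
        ok, message = False, f"bad UserParameters: {exc}"

    # Report the outcome back to CodePipeline so the action finishes.
    if ok:
        client.put_job_success_result(jobId=job_id)
    else:
        client.put_job_failure_result(
            jobId=job_id,
            failureDetails={"type": "JobFailed", "message": message},
        )
    return {"ok": ok, "message": message}
```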

For example, the result might look like this for Amazon’s DevSecOps deployment pipeline:

Situation B: What if I start on a green field?

Getting started with automation without previous experience means having to navigate the current range of tools and choose the right mix for your own needs. Without experience, however, it’s hard to determine what you’ll need throughout the pipeline, and there are usually two extremes:

- playing it safe and choosing a complete, fully equipped commercial solution at full price,

- building gradually and tediously, one component at a time, with uncertain results.

Neither approach is optimal in terms of financial and time investment.

Let’s start in the cloud

Cloud tools offer a better option here. They bring the usual flexibility in price and features and let you easily try the tools out, including full integration. They are ready for immediate use, require no upfront investment and scale easily. They are therefore a reasonable compromise between the two extremes above: you can start small and gradually build a large solution that meets the real needs of development and operations teams.

So if you are starting from scratch in the cloud or on-premise, I recommend building your deployment pipeline on SaaS services provided in the cloud. You get updated, tested, integrated and secured tools at a predictable cost.

And is this DevOps?

In this article, I tried to describe the needs and tools for building a pipeline from a technical point of view. We discuss the Dev/Sec/Ops approach itself, and our view of how it should be implemented, in this article in our Encyclopedia of the Cloud: a quick guide to the cloud series.

I’d be glad if you could let me know what your pipeline looks like. Let us know what your cloud preference is – will you stick with the tools you’re used to? Do you prefer the AWS toolset? Or do you have a good experience with Azure DevOps?

This is a machine translation. Please excuse any possible errors.