Fault injection or break it yourself!

For a long time, we said, “If it works, don’t touch it!” It’s simply better not to touch running technology, lest our road to failure be paved with good intentions. But in recent years we have a new approach – let’s “break” our own systems on an ongoing basis so that we can handle real incidents later. Isn’t that nonsense? If not, how to do it? And how does cloud technology help us do that?

Kamil Kovář

The idea of purposefully generating outages and errors on infrastructure is not new or foreign. Every large company that provides IT services at a professional level performs regular disaster recovery tests (for the automation of which we have developed a tool called TaskControl – Automation of real-time coordination of activities).

The company is therefore testing the outage on a large scale, i.e. a macroscopic approach. It turns off one large part of the infrastructure, typically systems in one datacenter, in a controlled manner and turns on all services in an alternate location.

I’m sure you also run DR tests in your company and you can rely on the robustness of the environment, right?

Targeted outages on a small scale, i.e. a microscopic approach, is a relatively new issue and has its origins in the first decade of this century. In the same way that we build systems with data centre fault tolerance, we build them with fault tolerance for smaller units, a virtual server, a container or an individual service. We use technologies for failover or blackout-free restart of services.

Infrastructure accessibility

We have historically addressed robustness:

- infrastructure at the datacentre level (dual power supplies, network cooling, control, etc.),

- at the hardware level (redundant power supply, internal cooling, disk RAID, etc.),

- at the virtualization (OS or container) level.

When providing cloud services, all of these IT components are the responsibility of the cloud provider. Despite all the redundancy, we get a relatively low availability guarantee.

What does Amazon have to say about this? AWS will use commercially reasonable efforts to make Amazon EC2 and Amazon EBS … of at least 99.99%, in each case during any monthly billing cycle (the “Service Commitment”).

Microsoft guarantees 99.9% network availability for individual virtual machines on the Service Level Agreements Summary | Microsoft Azure.

For details on infrastructure services in the cloud, I recommend the article by my colleague Jakub Procházka (6) Building the Basics: Infrastructure as a Service | LinkedIn.

( ORBIT is also preparing a December special on high availability in the cloud for more details. To make sure you don’t miss it, just follow the company’s LinkedIn profile.)

Software availability

The ideal way to achieve truly high availability is not to rely on infrastructure availability (although for less critical applications this approach is sufficient), as long as 99.9% of the target SLA is guaranteed. Even there, of course, I have to take care of backups and how to restore services.

We therefore continue to achieve high availability at the software level for critical applications. Primarily on the middleware (in the sense of cluster technologies at the application platform or database level) or in the code of the software solution itself.

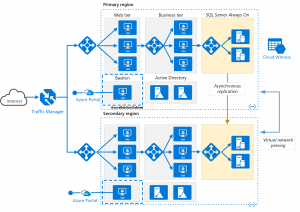

The goal is for the software to enable:

- redirecting or decomposing queries on the integration, user, or data interface, typically by load balancer services or network services,

- similar distribution of the load of calculations and transformations on the application layer,

- redirection within active/passive clusters or active/active clusters at the database layer.

For example, we may get a sympathetic architecture like this:

These functionalities are supported by native cloud services such as scalesets, application gateways and load balancers, highly available databases and more.

An important condition is the ability of the softwareto work in a load balancing configuration, i.e. be configured for multiple redundant components performing the same operation.

Some layers may use a stateless module to handle the incoming request regardless of the historical progress of the session (i.e. communication with the counterparty). Such a component does not retain any data and can be removed at any time.

Other times we have to take into account some loss of session continuity if the module is stateful and maintains a running transaction in temporary memory.

It is generally recommended to keep sessions in a fast distributed storage such as AWS Redis or Azure Cache for Redis.

User experience on a classic example

Let’s imagine a situation where I order goods in an e-shop and recalculate the price in the shopping cart after adding another item. I only notice the sudden crash of a container with a stateless component to calculate the total price in such a way that the action lasted not one second but three, because the calculation call had to be repeated against another container. I’m carrying on with my shopping as normal.

When selecting the method and place of delivery, the user interface may still show a blank selection after the selection is made. This time the state component, which was responsible for site selection, map base and list of subscription points, failed. Instead of selecting a delivery box in our village, the API returned an error, which as a user I will not know about. I go back to selecting the subscription point again and another instance of the component now takes me through the same steps with only a slight loss of user experience.

How not to test on customers

Here we come to the essence of why we use fault injection within the field of chaos engineering. We always perform functional and acceptance tests to make sure that the application executes the written code correctly.

However, we are not used to testing crashes of individual redundant components, i.e. whether the behaviour of the application is still in line with the expected UX (user experience). We actually do this with a great deal of uncertainty in production environments down to the customers of the service.

That attitude is costing us:

- Reputational risk if redundancy is poor and UX is very poor. This is a very common case known among others. from supplies to the public administration.

- Unnecessary cost because we don’t know how to set the target robustness. There is a risk that the individual application layers are set unevenly and unnecessarily generously. In cloud services, robustness is usually configurable with a few lines of code that result in several orders of magnitude higher cost. You can read more about cloud costs here.

Fault injection a chaos engineering

The field of insertion of bugs is not limited to IT infrastructure, but also concerns software development where it is possible to deliberately distort certain parts of the code (products such as ExhaustIF and Holodeck). It is also used in other disciplines, including physical manufacturing (think of aerospace engineering and verifying the robustness of the systems of a satellite that then flies in orbit for twenty years).

What do we need in our industry? In IT systems (operations) it is important for us to be able to insert possible errors into the infrastructure:

- Planned – All operations and support functions must be aware of planned partial outages,

- In a controlled manner – I must not indiscriminately break too much of the infrastructure,

- With measurement – I need to be able to assess the impact of errors on service quality.

Netflix is considered a pioneer in the field, having introduced chaos engineering way back around 2011 and developed its own ChaosMonkey tool for targeted disruption of compute resources, network components and component states. The tool was soon published as open source.

ChaosMonkey was soon enhanced by the addition of Simian Army with a more sophisticated approach to introducing bugs, allowing for better tuning of the necessary system resilience. Chaos engineering has been gradually introduced by all major service companies such as Google, Microsoft, Facebook/Meta and others.

However, this does not mean that it is a discipline for large companies with CDNs (content delivery networks) and large-scale services. Fault injection should gradually become part of the processes in all IT companies providing high-availability services. Anyone can easily try testing on their existing environment using the tools described below.

Tool support

In a short time, several interesting instruments with varying degrees of detail were created. Breaking is traditionally a simple activity. This includes shutdowns, component restarts, artificial load generation and reconfigurations.

The complex part is the control and monitoring part, where I need to see the behavior of applications and components that are intentionally affected by the error. After ChaosMonkey tools like Gremlin, ChaosMesh, ChaosBlade, Litmus and others appeared. For example, the well-known Istio has support for fault injection.

Here, let’s describe Gremlin’s enhanced tool, the new native tools from major cloud providers AWS FIS and Azure Chaos Studio.

Gremlin

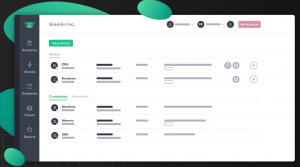

Gremlin (dating back to 2014) is a well-known online bug-insertion tool with agent support and connection to cloud services.

Its interface is simple, as is its use. The agent must be installed on each guest that is to be its target.

Basically, Gremlin allows attacks on resources in virtual machines or containers, including overloading, restarting or shutting them down, on databases or on networks. It can overload CPU, memory and I/O, for example, it can change system time or kill specific processes. Causes network communication to be dropped, causes network latency, or prevents access to DNS.

The scenarios allow you to combine attacks and monitor the status of affected target components. Imagine, for example, periodically verifying the correct function of scalesets by purposely overloading nodes in the set and monitoring whether new nodes turn on fast enough.

Gremlin supports Service Discovery within the target environment. It can detect system instability and stop attacks in time before they cause service disruption.

For testing within the same team, Gremlincan be used free of charge.

AWS Fault Injection Simulator (AWS FIS)

The new (2020) AWS FIS service testing EC2, ECS, EKS, RDS by shutting down or restarting machines and services is also part of Amazon’s portfolio. This is very similar fault injection logic, adapted to the AWS environment, including the ability to define tests as JSON documents. Thanks to the integration, you don’t need to use an agent for most of the actions you perform, except when you need to load resources.

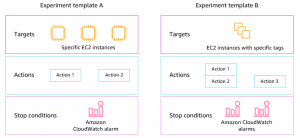

In AWS FIS , you create an action on a targetaccording to the available conditions. Action chaining allows you to define advanced scenarios. It is possible to set a condition under which the test will terminate (if I significantly disrupt the functionality of the environment). The whole concept allows integration into the CD pipeline.

The account under which the tests run, which must have access to the resources it operates, has fairly high privileges and needs to be accessed as such.

AWS FIS is paid per use for the number of actions performed.

Azure Chaos Studio

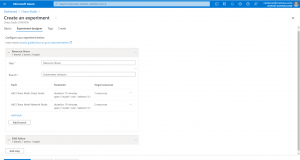

At the time of writing, Azure Chaos Studio for Microsoft Azure is in Preview and is therefore being provided for testing until April 2022 before it rolls out on a pay-per-use model.

The concept of the tool is similar to the previous ones, where on the one hand I create experiments in Chaos Studio, for example load experiments, and in Application Insights I continue to monitor the behavior of the resource. The errors I can generate are again CPU, virtual or physical memory loads, I/O, resource restarts, system time changes, changes and failures on container infrastructure, and more. The list is gradually expanding to include the most used cloud resources in Azure. The presence of an API will allow integrations with other third party products.

Let’s design systems better

The discipline of chaos engineering and controlled insertion of bugs into infrastructure is developing promisingly. With cloud services, we get our hands on extensive infrastructure and advanced tools. We can easily and safely model outages as they actually occur statistically (we can rely on provider numbers for component availability).

We match the resulting behavior of the infrastructure, which is under the pressure of managed outages, to the custom configuration of the infrastructure and software tools so that the resulting number matches the SLA for the application provided. With fault injection tools, we have the opportunity to stop designing robustness by looking out of the window, but to draw on tangible experience and the language of numbers.

When do you think the adoption of chaos engineering will happen in your company? Let me know in the article discussion if you would dare to take this approach today!

This is a machine translation. Please excuse any possible errors.