Containers in the cloud – how to use them?

A little history: Containerisation technologies have been available since the late 1970s, but the massive expansion of containers began with the advent of Docker on Linux.

Docker

When Docker appeared in 2013, it immediately gained a lot of popularity, mainly due to its complete set of tools for creating, launching, managing, distributing and orchestrating containers. Docker is included in most Linux distributions, but it can also be used on other operating systems. You can use the Docker Hub public repository to publish and distribute containers.

Docker is still a major player in the container field, but recently significant competition has emerged in the form of more specialized tools like Podman, Buildah, Skopeo and others. Another change is that containerd, originally part of Docker, has become a separate project within the Cloud Native Computing Foundation. But Docker’s main competitive advantage is still the breadth and completeness of the tools it provides.

Container format standards are handled by the Open Container Initiative, a project under the Linux Foundation. Although containers originated on Linux, Docker containers can also run on Windows Server (2016 or 2019).

What do we need these containers for?

You could say that containers are the next logical step in application distribution and management, following the great proliferation of virtual machines (VMs) since 2000.

Computer virtualization has enabled significant simplification of application deployment and more efficient use of resources. But it also brought with it the need to run a large number of operating systems. The operating system is something that we need to run the application, but which in itself does not bring any direct benefit.

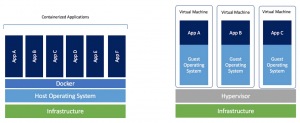

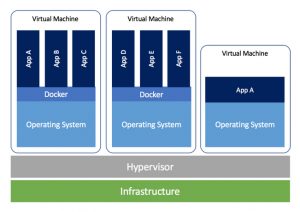

The VM disk image holds the files needed to run the application and the operating system. The container, on the other hand, contains only the files needed to run the application, not the operating system. We can see this in the following picture:

Let’s imagine that there are actually two physical servers. While applications A-F on the left run in containers on a single shared operating system, applications A-C on the right each have their own operating system. Comparing the two scenarios, the solution with containers on the left offers some significant advantages:

- The operating system runs only once instead of three times, so the OS itself consumes roughly a third of the CPU and RAM.

- The OS was installed only once, so we saved time (even though it may have been automated) and gigabytes of disk capacity.

- The size of the container disk image is dramatically (for example 100×) smaller than the size of the OS disk image with the application.

- Thanks to these savings, we are able to run more applications on the same hardware.

But even more important than savings on hardware resources are the other features of containers:

- Thanks to the small size of the container image, we are able to upload and run the container very quickly. Launching the container takes seconds (launching a VM with an application usually takes minutes). If, for example, the server fails, the application can be running on another one in a few seconds. If the number of requests for an application increases significantly, we can run its container on other servers very quickly, thus scaling performance.

- A well-designed container is a clear definition of what the application looks like and what dependencies it uses. We can be sure that every container launch leads to the same result because the launch is automatic.

- Containerized applications can be run on different platforms on-premises and in the cloud. Containers are very portable.

- Containers simplify some network issues (e.g. I can start a container without assigning another IP address).

- Containers integrate well with development tools. A well-prepared build pipeline can automatically produce container images that are waiting impatiently to be run.

- The use of containers can also lead to licensing savings in some scenarios.

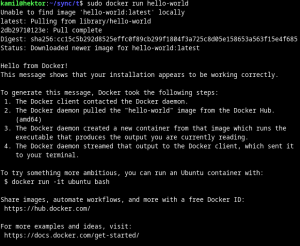

Probably the simplest container imaginable is called hello-world and is available for download on the Docker Hub. If you have Docker installed, you can run this container with a single command: docker run hello-world:

What exactly happened? Several activities took place:

- Docker detected that the hello-world container image was not available locally (we could have checked this ourselves with docker images).

- Docker pulled the necessary container image from Docker Hub (docker pull).

- Docker created a new container instance from the downloaded image and ran it (docker run).

- The container terminated once the task inside it completed (the container instance is now in the Exited state).
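The sequence above can be sketched as a small decision procedure. This is a simplified model for illustration, not Docker's actual implementation; the function name `plan_docker_run` and the idea of passing the set of locally available images as an argument are assumptions.

```python
def plan_docker_run(image: str, local_images: set[str]) -> list[str]:
    """Return, in order, the simplified steps behind `docker run IMAGE`."""
    steps = []
    if image not in local_images:
        # The image is missing locally, so it is pulled from the registry first.
        steps.append(f"pull {image}")
    # A new container instance is created from the image and started.
    steps.append(f"create container from {image}")
    steps.append("start container")
    # When the main process finishes, the container ends up in the Exited state.
    steps.append("container exits")
    return steps


for step in plan_docker_run("hello-world", local_images=set()):
    print(step)
```

On a second run the image is already available locally, so the pull step is skipped and the container starts even faster.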

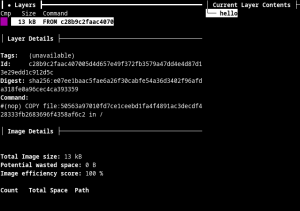

And what does the container image actually look like? It’s nothing complicated: it’s basically an archive of files and metadata, usually with multiple layers corresponding to the successive changes made to the image. For example, you can use docker image save or the dive tool to look inside. The hello-world container contains a single file called hello in addition to the metadata:
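To make the "archive of files and metadata" idea concrete, here is a minimal sketch that reads the layer list from such an archive. It assumes the archive has a top-level manifest.json that lists the layer archives, as the output of docker image save typically does. So that it runs without Docker, it builds a tiny stand-in archive in memory; the contents of that stand-in are hypothetical.

```python
import io
import json
import tarfile


def list_layers(archive: tarfile.TarFile) -> list[str]:
    """Return the layer paths named in the archive's manifest.json."""
    manifest = json.load(archive.extractfile("manifest.json"))
    # manifest.json is a list with one entry per image stored in the archive.
    return [layer for entry in manifest for layer in entry["Layers"]]


def make_demo_archive() -> bytes:
    """Build a tiny stand-in archive in memory (hypothetical contents)."""
    manifest = [{
        "Config": "config.json",
        "RepoTags": ["hello-world:latest"],
        "Layers": ["layer0/layer.tar"],
    }]
    payload = json.dumps(manifest).encode()
    buffer = io.BytesIO()
    with tarfile.open(fileobj=buffer, mode="w") as tar:
        info = tarfile.TarInfo("manifest.json")
        info.size = len(payload)
        tar.addfile(info, io.BytesIO(payload))
    return buffer.getvalue()


with tarfile.open(fileobj=io.BytesIO(make_demo_archive())) as tar:
    print(list_layers(tar))
```

For a real image, you could first export it with docker image save hello-world -o hello.tar and then pass tarfile.open("hello.tar") to the same function.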

So I only need HW, OS and Docker (for example) to run containers? In theory, yes, but in practice containers are typically run in an OS that itself runs in a VM, thus taking advantage of the strengths of both technologies:

Container Orchestration

Container orchestration is the automation of certain activities related to the operation of containers. While these activities can theoretically be carried out manually, it is only by automating them that we can take full advantage of the benefits that containers bring. These activities include:

- Distribution of container images

- Central configuration, job scheduling

- Resource allocation, starting and stopping containers

- High availability (if one server fails, the application is still available)

- Horizontal scaling (application runs in multiple parallel instances)

- Network services (service discovery, IP addresses, ports, balancing, routing)

- Monitoring, health checks
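Some of these activities, namely resource allocation, horizontal scaling and high availability, can be illustrated with a toy scheduler. This is only a sketch under simplifying assumptions (CPU is the only resource, every container of an application needs the same amount); the names `Node`, `place`, `scale` and `handle_node_failure` are made up for this example, and real orchestrators are far more sophisticated.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    free_cpu: float
    healthy: bool = True
    containers: list = field(default_factory=list)


def place(container: str, cpu: float, nodes: list) -> Node:
    """Put one container on the healthy node with the most free CPU."""
    candidates = [n for n in nodes if n.healthy and n.free_cpu >= cpu]
    if not candidates:
        raise RuntimeError("not enough capacity in the cluster")
    target = max(candidates, key=lambda n: n.free_cpu)
    target.containers.append(container)
    target.free_cpu -= cpu
    return target


def scale(app: str, replicas: int, cpu: float, nodes: list) -> None:
    """Horizontal scaling: start several parallel instances of one app."""
    for i in range(replicas):
        place(f"{app}-{i}", cpu, nodes)


def handle_node_failure(failed: Node, cpu: float, nodes: list) -> None:
    """High availability: restart a failed node's containers elsewhere."""
    failed.healthy = False
    orphans, failed.containers = failed.containers, []
    for container in orphans:
        place(container, cpu, nodes)


cluster = [Node("node-a", 4.0), Node("node-b", 4.0)]
scale("web", replicas=3, cpu=1.0, nodes=cluster)
handle_node_failure(cluster[0], cpu=1.0, nodes=cluster)
print({n.name: n.containers for n in cluster})
```

After the simulated failure, all three instances end up on the remaining healthy node, which is exactly the behaviour we would otherwise have to arrange by hand.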

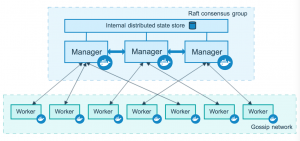

Swarm

Swarm is an orchestration tool that is distributed with Docker. It is therefore arguably the easiest option, if only because Swarm is relatively simple to configure. Cluster nodes are divided into two types – Manager and Worker:

Mirantis, the owner of Docker Enterprise, continues to support the development of Swarm, although the company itself now focuses more on Kubernetes in the cloud.

Kubernetes

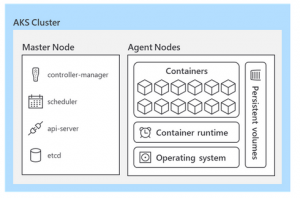

Kubernetes (also K8s) is currently the de facto standard for container orchestration. It was developed by Google, building on its experience with internal cluster management, and version 1.0 was released in 2015. A Kubernetes cluster uses two main types of nodes – Control plane and Worker node:

While Kubernetes is an open source project, commercial support is available from several major software companies – Red Hat, Rancher (SUSE), VMware and others.
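In Kubernetes, the desired state of an application is described declaratively, and the cluster then works to maintain it. As a minimal sketch, the following builds a Deployment manifest asking for three parallel instances of a container. The application name web and the image nginx:1.27 are only illustrative choices, and the helper `deployment_manifest` is a made-up name.

```python
import json


def deployment_manifest(name: str, image: str, replicas: int) -> dict:
    """Build a minimal Kubernetes Deployment manifest as a plain dict."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            # Horizontal scaling: how many parallel instances should run.
            "replicas": replicas,
            # The selector must match the labels of the pod template below.
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {"containers": [{"name": name, "image": image}]},
            },
        },
    }


print(json.dumps(deployment_manifest("web", "nginx:1.27", 3), indent=2))
```

Since kubectl accepts JSON as well as YAML, the output could be saved to a file and applied with kubectl apply -f deployment.json.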

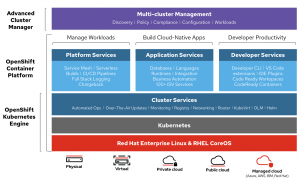

Red Hat OpenShift

OpenShift is a Red Hat commercial product that includes the Container Platform and Advanced Cluster Management in addition to the Kubernetes Engine itself. The number of services included is so large that it would warrant a separate article.

The community open source variant of OpenShift (without some features) is called OKD.

How to containerize an application?

Almost any existing application (unless it somehow uses HW resources directly) can be turned into a container. However, it is much better if the application was developed from the beginning with the intention of running it as a container. This implies some features that such an application should ideally have:

- The application should be stateless, data should not be stored in the container itself (it should be in a separate storage – in a database, in a shared cache, on a shared disk, on a shared file system, etc.).

- The application should tolerate being terminated at any time and should start quickly and easily.

- The application should be able to run in parallel so that it can be scaled.

- The app should only do one thing (the microservices concept).

- The application configuration should be passed using environment variables (so the configuration is not part of the container).
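The last point in the list can be sketched in a few lines. The variable names DATABASE_URL, PORT and DEBUG and their default values are assumptions chosen for this example; the point is only that the same image can run in different environments without being rebuilt.

```python
import os
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class Config:
    database_url: str
    port: int
    debug: bool


def load_config(env: Mapping[str, str] = os.environ) -> Config:
    """Read the configuration from environment variables, with defaults."""
    return Config(
        # State lives outside the container, e.g. in a database.
        database_url=env.get("DATABASE_URL", "postgres://localhost/app"),
        port=int(env.get("PORT", "8080")),
        debug=env.get("DEBUG", "false").lower() == "true",
    )


print(load_config({"PORT": "9000", "DEBUG": "true"}))
```

Passing a plain dictionary instead of the real environment, as in the last line, also makes the configuration easy to test.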

But even containerization of legacy applications can make sense in a surprising number of cases – the benefits can be, for example:

- automation and acceleration of development and deployment,

- simplification of activities related to the operation of the application,

- reduced hardware requirements,

- easier deployment in the cloud,

- pressure to modernize applications in the organization.

How you create a container depends on the tool you use. A container image is usually created by taking an existing image and deriving a new image from it by adding application files and dependencies. With Docker, for example, a Dockerfile defines the modifications to be made to the base image, and the docker build command creates the image.
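To show what such a derivation looks like, the following sketch generates the text of a small Dockerfile for a Python application. The base image python:3.12-slim and the file names requirements.txt and app.py are assumptions for illustration, and `render_dockerfile` is a made-up helper, not part of any Docker tooling.

```python
import json


def render_dockerfile(base_image: str, app_dir: str, command: list[str]) -> str:
    """Return Dockerfile text that derives a new image from base_image."""
    lines = [
        f"FROM {base_image}",             # the existing image we start from
        f"WORKDIR {app_dir}",             # working directory inside the image
        "COPY requirements.txt .",        # add the dependency list
        "RUN pip install --no-cache-dir -r requirements.txt",
        "COPY . .",                       # add the application files
        f"CMD {json.dumps(command)}",     # exec form: a JSON array
    ]
    return "\n".join(lines) + "\n"


print(render_dockerfile("python:3.12-slim", "/app", ["python", "app.py"]))
```

Saved as a file named Dockerfile next to the application, this could then be built with docker build -t my-app . (the tag my-app is again just an example).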

But there is often a much simpler way – to use an existing ready-made container image. In this case, I just need to start the container with the appropriate parameters and, if needed, attach the necessary volume.
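As a sketch of what "appropriate parameters" can mean, the following assembles a docker run command with environment variables, a volume and a published port. It only builds and prints the command; it does not execute it. The postgres:16 image, the volume name pgdata and the password value are illustrative assumptions.

```python
import shlex


def docker_run_command(image, env=None, volumes=None, ports=None):
    """Assemble a `docker run` command line as a list of arguments."""
    cmd = ["docker", "run", "--detach"]
    for key, value in (env or {}).items():
        cmd += ["--env", f"{key}={value}"]                 # configuration
    for source, target in (volumes or {}).items():
        cmd += ["--volume", f"{source}:{target}"]          # persistent data
    for host_port, container_port in (ports or {}).items():
        cmd += ["--publish", f"{host_port}:{container_port}"]
    cmd.append(image)
    return cmd


command = docker_run_command(
    "postgres:16",
    env={"POSTGRES_PASSWORD": "change-me"},
    volumes={"pgdata": "/var/lib/postgresql/data"},
    ports={5432: 5432},
)
print(shlex.join(command))
```

The volume keeps the data outside the container, so the container itself can be thrown away and recreated at any time.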

Containers in the cloud

Finally, we come to the cloud: so how does the cloud relate to containers? If we have a container image ready, we are able to deploy and run it in the cloud relatively easily. In addition, the containerized application has features (mentioned above) that make it easier to operate in the cloud, regardless of the chosen cloud provider.

Which specific cloud services can we use today to run containers? Let’s take a look at the two largest cloud providers:

AWS (Amazon Web Services)

Amazon Web Services includes several services that allow you to run containerized applications, but they differ in deployment methods and features. We’ll describe the ECR registry, the nearly serverless Fargate, the standard ECS, EKS based on Kubernetes, Lambda, Elastic Beanstalk, and the ability to run the Red Hat OpenShift platform directly on AWS services.

ECR

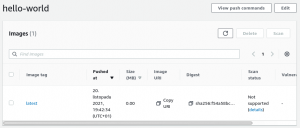

Elastic Container Registry is a highly available service that allows you to store and share container images. Access to images is controlled by access rights. The entire container image lifecycle is managed – versioning, tagging, archiving. Images stored in ECR can be used in other AWS services (see below) or simply distributed wherever they are needed:

Repositories created in ECR can be public or private, and images can be automatically or manually scanned for vulnerabilities and encrypted.
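An image in a private ECR repository is addressed by a name that combines the AWS account ID, the region, the repository and a tag. A minimal sketch of how that name is composed follows; the account ID 123456789012, the region and the repository name are placeholder values, and `ecr_image_uri` is a made-up helper.

```python
def ecr_image_uri(account_id: str, region: str, repository: str,
                  tag: str = "latest") -> str:
    """Compose the full image name for a private ECR repository."""
    registry = f"{account_id}.dkr.ecr.{region}.amazonaws.com"
    return f"{registry}/{repository}:{tag}"


print(ecr_image_uri("123456789012", "eu-central-1", "my-app", "1.0.0"))
```

After authenticating Docker against the registry, a local image can be given this name with docker tag and uploaded with docker push.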

You pay for the data transferred, but a relatively large volume is free every month.

Fargate

Fargate is a very interesting AWS service that allows running "serverless" containers. This means that I don’t have to decide which server the container or containers will run on; I just define the required HW resources and OS. (The operating system is Amazon Linux 2 or Windows Server 2019.) AWS then makes sure the container is running and has resources available.

The service is charged on the basis of CPU and RAM per hour, in the case of Windows there is also an OS license fee. Fargate cannot be used alone, but only in combination with ECS or EKS, where I select Fargate as the option to run the container.
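The pricing model can be expressed as simple arithmetic. In the sketch below, all rates are hypothetical placeholders and not actual AWS prices, and the Windows license fee is simplified to a flat hourly amount; check the current price list for real numbers.

```python
def fargate_cost(vcpu: float, memory_gb: float, hours: float,
                 vcpu_hour_rate: float, gb_hour_rate: float,
                 os_license_hour_rate: float = 0.0) -> float:
    """Estimate the cost of one container: (CPU + RAM + license) x hours."""
    hourly = vcpu * vcpu_hour_rate + memory_gb * gb_hour_rate
    return hours * (hourly + os_license_hour_rate)


# Hypothetical rates: 0.04 per vCPU-hour and 0.004 per GB-hour.
linux_month = fargate_cost(0.5, 1.0, hours=720,
                           vcpu_hour_rate=0.04, gb_hour_rate=0.004)
print(f"{linux_month:.2f}")
```

Because we pay only for the hours during which the container actually runs, stopping idle containers directly reduces the bill.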

ECS

In Elastic Container Service, Amazon has realized its vision of what a cloud service for running containers should look like. If we don’t have special requirements in terms of cluster management and don’t mind some ECS specificity, this is probably the easier way to go.

Cluster management (communication with agents on nodes, API, configuration storage) is handled by the ECS service itself, so we just need to configure it and don’t have to worry about where it runs. The ECS cluster worker nodes are then implemented as EC2 instances or using Fargate.
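What we hand over to ECS is essentially a description of the container and the resources it needs, called a task definition. The sketch below builds a minimal one for Fargate as a plain dictionary. The family name, image and port are illustrative assumptions, and a real task definition usually needs further settings (for example an execution role) that are omitted here.

```python
import json


def fargate_task_definition(family: str, image: str, cpu_units: int,
                            memory_mib: int, container_port: int) -> dict:
    """Build a minimal ECS task definition targeting Fargate."""
    return {
        "family": family,
        "requiresCompatibilities": ["FARGATE"],
        "networkMode": "awsvpc",
        # Task-level CPU and memory are given as strings.
        "cpu": str(cpu_units),
        "memory": str(memory_mib),
        "containerDefinitions": [{
            "name": family,
            "image": image,
            "essential": True,
            "portMappings": [
                {"containerPort": container_port, "protocol": "tcp"},
            ],
        }],
    }


task = fargate_task_definition("web", "nginx:1.27", 256, 512, 80)
print(json.dumps(task, indent=2))
```

Saved to a file, such a definition could be registered with aws ecs register-task-definition --cli-input-json file://task.json. Note that nothing in it says which server the container runs on.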

ECS is provided free of charge, but there is a charge for additional AWS resources used (e.g. EC2).

EKS

Elastic Kubernetes Service is a service primarily for those who want a Kubernetes-compatible cloud service. They can therefore continue to use the usual tools, e.g. kubectl.

The Kubernetes control plane, which would be relatively complex to configure yourself, is available as a highly available AWS service.

Worker nodes can then be implemented using:

- EC2 (managed node groups or self-managed nodes)

- Fargate

EKS is charged per cluster per hour; again, you also need to pay for the AWS resources used (e.g. EC2).

Lambda

Lambda is a service that allows you to run serverless code in the cloud. Usually it is used to call a simple specific function that responds to an event, but one of the ways to configure a Lambda function in AWS is to choose a container image that will perform the desired function. For some more complicated things requiring additional integration libraries (which I can have ready in the container) this may be the way to go.
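Even when packaged as a container image, a Lambda function still comes down to a handler that receives an event and returns a result. A minimal sketch of such a handler in Python follows; the event field name and the shape of the response are assumptions made for this example.

```python
import json


def handler(event, context):
    """Respond to an event; `context` carries runtime info and is unused here."""
    name = event.get("name", "world")
    return {
        "statusCode": 200,
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


# Local call with a sample event; in the cloud the service invokes the handler.
print(handler({"name": "cloud"}, None))
```

Any extra libraries the function needs can be installed into the image at build time, which is the main reason to choose the container variant.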

In the case of Lambda, you pay for the time and RAM used, and the first million runs are free every month.

Elastic Beanstalk

Elastic Beanstalk is a managed service that allows running web applications of various types; one of the options is to run an application packaged as a container.

There is no charge for Elastic Beanstalk itself; you pay only for the resources used.

Red Hat OpenShift on AWS (ROSA)

If we want, we can also run the complete Red Hat OpenShift platform in the AWS cloud. This is done through Quick Start, which is the automatic deployment of all necessary resources in AWS according to templates (CloudFormation). The installation will deploy three control plane nodes and a variable number of worker nodes.

Charges apply for used AWS resources (e.g. EC2) and a Red Hat OpenShift subscription is required.

Microsoft Azure

Microsoft Azure also offers a number of services that allow containers to run. We’ll look at the ACR registry, Container Instances, AKS, Azure Functions, App Service, and again the ability to run Red Hat OpenShift directly in Azure.

Azure Container Registry

Like AWS ECR, Azure Container Registry enables the storage and distribution of container images.

Azure Container Instances

Azure Container Instances allow you to run both Linux and Windows containers without having to set up virtual servers, similar to AWS Fargate. However, Azure Container Instances also allow you to deploy containers directly based on defined rules.

CPU and RAM are charged per time unit, in the case of a Windows container the OS license is added.

AKS

Azure Kubernetes Service is a service that provides Kubernetes clusters in Azure, similar to EKS from AWS.

There is no charge for AKS clusters, only for additional Azure resources consumed.

Azure Functions

Azure Functions are similar to AWS Lambda and also allow you to run serverless code in container form. You pay for time and RAM used, and there are a relatively large number of free requests each month.

Azure App Service

App Service is a service that enables the operation of web applications of various types, including containerized ones. The service is charged according to the allocated hardware resources.

Azure Red Hat OpenShift

Similar to AWS, it is possible to deploy a complete managed OpenShift infrastructure in Azure, which is operated in the cloud by Microsoft in collaboration with Red Hat.

Containers in brief

Containers are a technology that has reached a sufficient level of maturity and that has unquestionable benefits for the development and operation of applications – in private datacenters and in the cloud. There are many options available to us to operate containers efficiently, some of which we have presented today.

The next logical step is a serverless architecture, which you can read about here. However, other articles from the Cloud Encyclopedia are certainly worth your attention.